Twitter says it is deploying new policies that the social network hopes will keep pace with the state of influence operations and disinformation today.

Jeff Chiu/AP

Twitter is rolling out new features on Thursday that it says will keep pace with disinformation and influence operations targeting the 2020 election.

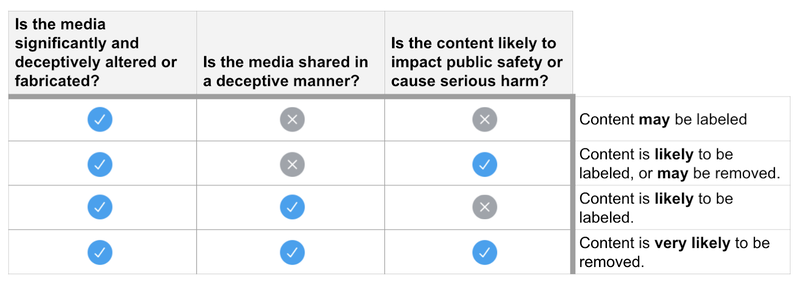

A new policy on “synthetic and manipulated media” attempts to flag and provide greater context for content that the platform believes to have been “significantly and deceptively altered or fabricated.”

Starting Thursday, when users scroll through posts, they may begin seeing Twitter’s new labeling system: a blue exclamation point and the words “manipulated media” beneath a video, photo or other media that the platform believes to have been tampered with or deceptively shared.

This could include deepfakes, high-tech videos that depict events that never happened, or “cheapfakes” made with low-tech editing, like speeding up a video or slowing it down.

Content moderators will use these criteria to determine whether Tweets and media should be labeled or removed.

Fb

Twitter’s head of site integrity, Yoel Roth, told NPR that moderators will be watching for two things:

“We’re looking for evidence that the video or image or audio have been significantly altered in a way that changes their meaning,” Roth said. “In the event that we find evidence that the media was significantly modified, the next question we ask ourselves is, is it being shared on Twitter in a way that is deceptive or misleading?”

If the media has been modified to the extent that it could “impact public safety or cause serious harm,” then Twitter says it will remove the content entirely.

Twitter says this could include threats to the physical safety of a person or a group, or to an individual’s ability to exercise their human rights, such as participating in elections.

When users tap on newly labeled posts, they’ll be shown “expert context” explaining why the content isn’t trustworthy.

If someone tries to retweet or “like” the content, they’ll receive a message asking if they really want to amplify an item that is likely to mislead others.

Twitter says it may also reduce the visibility of the misleading content.

We know that some Tweets include manipulated photos or videos that can cause people harm. Today we’re introducing a new rule and a label that will address this and give people more context around these Tweets pic.twitter.com/P1ThCsirZ4

Twitter Safety (@TwitterSafety), February 4, 2020

Evolution of interference

Twitter was caught “flat-footed” by the active measures that targeted the U.S. election in 2016, Roth said. During that year, influence specialists used fake accounts across social media to spread disinformation and amplify discord.

That work never really stopped, national security officials have said, and officials warned ahead of Super Tuesday’s primaries that it continues at a comparatively low but steady state.

Roth told NPR that while Twitter has not traced specific tweets about the 2020 campaign back to Russia, it is trying to apply what it learned from the last presidential race, including about the use of fake personae.

“These would be accounts that were pretending to be Americans to try to influence certain parts of the conversation,” Roth said in an interview with NPR’s Ari Shapiro.

While this tactic has remained a part of Russia’s toolkit, the playbook has also continued to expand.

In 2018, Russia didn’t just interfere in the election; influence-mongers tried to make it look like there was more interference than there actually was.

“We saw activity that we believe to have been connected with the Russian Internet Research Agency that was specifically targeting journalists in an attempt to convince them that there had been large scale activity on the platform that didn’t actually happen,” Roth said.

This time around, rather than create their own messaging, Russia and other foreign actors are amplifying the voices of real Americans, Roth said. By re-sharing extreme, albeit authentic, content, they are able to manipulate the platform without introducing any additional misinformation.

“I think in 2020, we’re facing a particularly divisive political moment here in the United States and attempts to capitalize on those divisions among Americans seem to be where malicious actors are heading,” Roth said.

The social network has studied fake accounts and coordinated manipulation efforts and has put its new policies to the test during elections in the European Union and India.

Roth says Twitter has also built a team of experts, including community moderators and partners in government and academia. Another priority is transparency.

When the platform uncovers “state-backed information operations,” it shares the data publicly.

Twitter feeds will start to look different as the social network begins flagging posts that it thinks are part of broad deception campaigns.

Amr Alfiky/AP

Weaponizing deception

Foreign actors aren’t the only ones likely to be affected by this new policy. Misinformation can also come from American political campaigns trying to use social media to their advantage.

Roth says he has already seen American 2020 candidates employ questionable tactics to get voters’ attention.

“We’ve seen … accounts that are pretending to be compromised. So they’ll sort of pretend that they were hacked and then share content that they might otherwise not have,” Roth said, along with “large scale attempts to mobilize volunteers to share content on the service.”

While neither of these actions necessarily violates Twitter’s policies, there are moments where they can cross the line.

For example, former New York City Mayor Michael Bloomberg’s presidential campaign hired hundreds of temporary workers to post campaign messages on Facebook, Twitter and Instagram.

Twitter decided to suspend dozens of these pro-Bloomberg accounts. Roth says the decision was not based on the fact that these individuals were being paid to post content.

“Our focus whenever we’re enforcing our policies is to look at the behavior that the accounts are engaged in, not who we think is behind them or what their motivations were, because in most instances we don’t know,” Roth said.

Twitter determined that the suspended accounts were “engaged in spam.”

If a large number of people organically decide to post the same message or similar messages at the same time, that’s not likely to qualify as spam, Roth said.

But if individual users create multiple accounts just for the purpose of tweeting a specific message, then it does. They’re over-amplifying their voice in a way that constitutes manipulation.

According to an NPR/PBS NewsHour/Marist poll, 82 percent of Americans think they will read misleading information on social media this election. Three quarters said they don’t trust tech companies to prevent their platforms from being misused for election interference.

Roth said the investments Twitter has made since 2018 to protect the “integrity of the platform” and ensure the security of its users have been significant, but he called that a balancing act.

This is why Twitter’s new policy doesn’t remove all manipulated content. To combat false information, Roth says Twitter is trying to highlight information from trustworthy sources.

For example, while Twitter isn’t removing all false information about the coronavirus, it is making sure that information from the Centers for Disease Control and Prevention is displayed prominently.

“I think a platform has a responsibility to create a space where credible voices can reach audiences that are interested in understanding what they have to say,” Roth said.

“That doesn’t mean that we don’t allow discussion of these issues throughout the product, but we want to make sure that when you first come to Twitter, the information that you see in our product is going to come from authoritative sources.”

The post Twitter Vows That As Disinformation Tactics Change, Its Policies Will Keep Pace : NPR appeared first on Down The Middle News.

source https://downthemiddlenews.com/twitter-vows-that-as-disinformation-tactics-change-its-policies-will-keep-pace-npr/
